Stay updated with the latest AI news from TechCrunch, The Verge, VentureBeat, MIT Technology Review and more — updated automatically every hour.

- US regulator blacklists all new foreign-made routers on March 24, 2026

The US Federal Communications Commission on Monday banned authorizations for all new consumer routers produced in foreign countries, citing "national security" reasons.

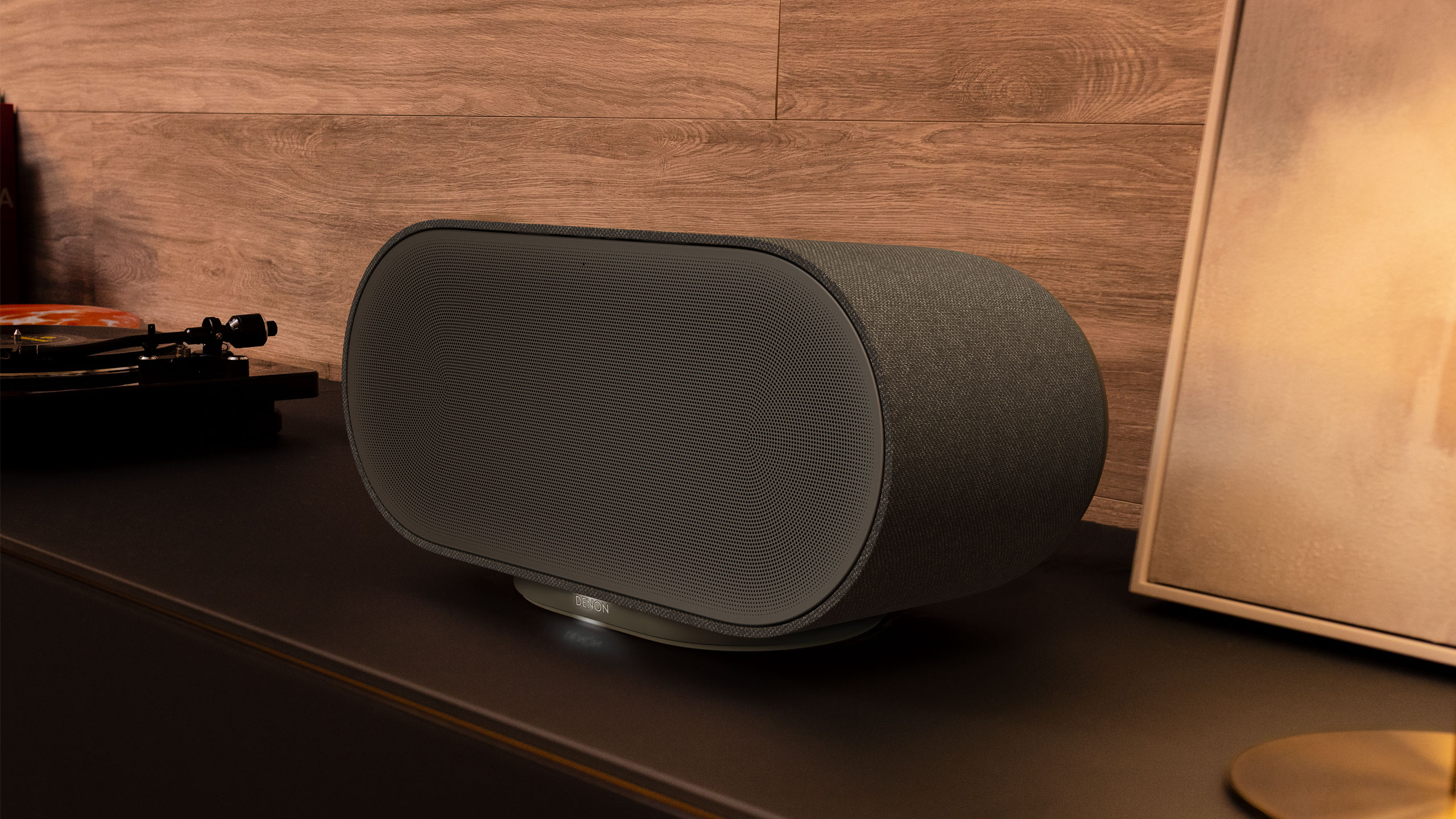

- Denon expands its multi-room speaker lineup with the Home 200, Home 400 and Home 600 on March 24, 2026

If the Sonos app saga still has you down, Denon has three new multi-room speakers that give you some fresh alternatives. The company’s Home 200, Home 400 and Home 600 offer audio flexibility with other HEOS-enabled products. These new devices were also designed to blend in with home decor better than most speakers, coming in stone and charcoal color options for that purpose. As you progress up in number, the speakers not only get physically larger, but their sonic output is also more robust.

The Denon Home 200 houses three drivers and three amplifiers for “natural, room-filling sound” in a compact speaker. More specifically, you get two 0.98-inch tweeters and a single 4-inch woofer. The Home 200 looks kind of like the Sonos Move 2, although Denon’s new compact unit isn’t portable. However, you can use a pair of them for a stereo setup, or connect two 200s to Denon’s Home Sound Bar 550 and Home Subwoofer for a 5.1 home theater system.

Next up is the Home 400, which carries two 0.75-inch tweeters, two 4.5-inch woofers and six amplifiers, in addition to two 1-inch up-firing drivers. Here, Denon says you can expect “a wide, airy soundstage” that provides room-filling audio coverage. What’s more, those upward-facing drivers project sound overhead, so there’s a greater sense of dimensionality and immersion.

The Home 600 is the largest speaker in the new trio, with dual 6.5-inch woofers alongside two tweeters, two midrange units and two up-firing drivers. Denon explains that this configuration offers “deep, authoritative bass” that provides more depth in your tunes than the other two models.

All three of the new Home speakers have Wi-Fi, Bluetooth, USB-C and aux connectivity, with the wireless streaming powered by Denon’s HEOS tech. As such, you can connect these Home speakers with up to 64 other HEOS devices, including A/V receivers and Denon’s new DP-500BT turntable, and arrange your audio gear in up to 32 different zones. You’ll have access to tunes from Tidal, Amazon Music HD and Qobuz in the HEOS app, and all three new Home speakers support Dolby Atmos Music where available.

The Home 200, Home 400 and Home 600 speakers are available today for $399, $599 and $799 respectively. They’re available from Denon directly or from other authorized retailers.

This article originally appeared on Engadget at https://www.engadget.com/audio/speakers/denon-expands-its-multi-room-speaker-lineup-with-the-home-200-home-400-and-home-600-080000916.html?src=rss

- What is DeerFlow 2.0 and what should enterprises know about this new, powerful local AI agent orchestrator? on March 24, 2026

ByteDance, the Chinese tech giant behind TikTok, last month released what may be one of the most ambitious open-source AI agent frameworks to date: DeerFlow 2.0. It's now going viral across the machine learning community on social media. But is it safe and ready for enterprise use?

This is a so-called "SuperAgent harness" that orchestrates multiple AI sub-agents to autonomously complete complex, multi-hour tasks. Best of all: it is available under the permissive, enterprise-friendly standard MIT License, meaning anyone can use, modify, and build on it commercially at no cost.

DeerFlow 2.0 is designed for high-complexity, long-horizon tasks that require autonomous orchestration over minutes or hours, including conducting deep research into industry trends, generating comprehensive reports and slide decks, building functional web pages, producing AI-generated videos and reference images, performing exploratory data analysis with insightful visualizations, analyzing and summarizing podcasts or video content, automating complex data and content workflows, and explaining technical architectures through creative formats like comic strips.

ByteDance offers a bifurcated deployment strategy that separates the orchestration harness from the AI inference engine. Users can run the core harness directly on a local machine, deploy it across a private Kubernetes cluster for enterprise scale, or connect it to external messaging platforms like Slack or Telegram without requiring a public IP.

While many opt for cloud-based inference via OpenAI or Anthropic APIs, the framework is natively model-agnostic, supporting fully localized setups through tools like Ollama. This flexibility allows organizations to tailor the system to their specific data sovereignty needs, choosing between the convenience of cloud-hosted "brains" and the total privacy of a restricted on-premise stack.

Importantly, choosing the local route does not mean sacrificing security or functional isolation.
Even when running entirely on a single workstation, DeerFlow still utilizes a Docker-based "AIO Sandbox" to provide the agent with its own execution environment. This sandbox, which contains its own browser, shell, and persistent filesystem, ensures that the agent’s "vibe coding" and file manipulations remain strictly contained. Whether the underlying models are served via the cloud or a local server, the agent's actions always occur within this isolated container, allowing for safe, long-running tasks that can execute bash commands and manage data without risk to the host system’s core integrity.

Since its release last month, it has accumulated more than 39,000 stars (user saves) and 4,600 forks, a growth trajectory that has developers and researchers alike paying close attention.

Not a chatbot wrapper: what DeerFlow 2.0 actually is

DeerFlow is not another thin wrapper around a large language model. The distinction matters.

While many AI tools give a model access to a search API and call it an agent, DeerFlow 2.0 gives its agents an actual isolated computer environment: a Docker sandbox with a persistent, mountable filesystem. The system maintains both short- and long-term memory that builds user profiles across sessions. It loads modular "skills" (discrete workflows) on demand to keep context windows manageable. And when a task is too large for one agent, a lead agent decomposes it, spawns parallel sub-agents with isolated contexts, executes code and Bash commands safely, and synthesizes the results into a finished deliverable.

It is similar to the approach being pursued by NanoClaw, an OpenClaw variant, which recently partnered with Docker itself to offer enterprise-grade sandboxes for agents and subagents.
But while NanoClaw is extremely open-ended, DeerFlow has more clearly defined its architecture and scoped its tasks. Demos on the project's official site, deerflow.tech, showcase real outputs: agent trend forecast reports, videos generated from literary prompts, comics explaining machine learning concepts, data analysis notebooks, and podcast summaries. The framework is designed for tasks that take minutes to hours to complete, the kind of work that currently requires a human analyst or a paid subscription to a specialized AI service.

From Deep Research to Super Agent

DeerFlow's original v1 launched in May 2025 as a focused deep-research framework. Version 2.0 is something categorically different: a ground-up rewrite on LangGraph 1.0 and LangChain that shares no code with its predecessor. ByteDance explicitly framed the release as a transition "from a Deep Research agent into a full-stack Super Agent."

New in v2: a batteries-included runtime with filesystem access, sandboxed execution, persistent memory, and sub-agent spawning; progressive skill loading; Kubernetes support for distributed execution; and long-horizon task management that can run autonomously across extended timeframes.

The framework is fully model-agnostic, working with any OpenAI-compatible API. It has strong out-of-the-box support for ByteDance's own Doubao-Seed models, as well as DeepSeek v3.2, Kimi 2.5, Anthropic's Claude, OpenAI's GPT variants, and local models run via Ollama. It also integrates with Claude Code for terminal-based tasks, and with messaging platforms including Slack, Telegram, and Feishu.

Why it's going viral now

The project's current viral moment is the result of a slow build that accelerated sharply this week.

The February 28 launch generated significant initial buzz, but it was coverage in machine learning media, including deeplearning.ai's The Batch, over the following two weeks that built credibility in the research community.
Then, on March 21, AI influencer Min Choi posted to his large X following: "China's ByteDance just dropped DeerFlow 2.0. This AI is a super agent harness with sub-agents, memory, sandboxes, IM channels, and Claude Code integration. 100% open source." The post earned more than 1,300 likes and triggered a cascade of reposts and commentary across AI Twitter.

A search of X using Grok uncovered the full scope of that response. Influencer Brian Roemmele, after conducting what he described as intensive personal testing, declared that "DeerFlow 2.0 absolutely smokes anything we've ever put through its paces" and called it a "paradigm shift," adding that his company had dropped competing frameworks entirely in favor of running DeerFlow locally. "We use 2.0 LOCAL ONLY. NO CLOUD VERSION," he wrote.

More pointed commentary came from accounts focused on the business implications. One post from @Thewarlordai, published March 23, framed it bluntly: "MIT licensed AI employees are the death knell for every agent startup trying to sell seat-based subscriptions. The West is arguing over pricing while China just commoditized the entire workforce." Another widely shared post described DeerFlow as "an open-source AI staff that researches, codes and ships products while you sleep… now it's a Python repo and 'make up' away."

Cross-linguistic amplification, with substantive posts in English, Japanese, and Turkish, points to genuine global reach rather than a coordinated promotion campaign, though the latter is not out of the question and may be contributing to the current virality.

The ByteDance question

ByteDance's involvement is the variable that makes DeerFlow's reception more complicated than a typical open-source release.

On the technical merits, the open-source, MIT-licensed nature of the project means the code is fully auditable. Developers can inspect what it does, where data flows, and what it sends to external services.
That is materially different from using a closed ByteDance consumer product.

But ByteDance operates under Chinese law, and for organizations in regulated industries (finance, healthcare, defense, government) the provenance of software tooling increasingly triggers formal review requirements, regardless of the code's quality or openness. The jurisdictional question is not hypothetical: U.S. federal agencies are already operating under guidance that treats Chinese-origin software as a category requiring scrutiny.

For individual developers and small teams running fully local deployments with their own LLM API keys, those concerns are less operationally pressing. For enterprise buyers evaluating DeerFlow as infrastructure, they remain pressing.

A real tool, with limitations

The community enthusiasm is credible, but several caveats apply.

DeerFlow 2.0 is not a consumer product. Setup requires working knowledge of Docker, YAML configuration files, environment variables, and command-line tools. There is no graphical installer. For developers comfortable with that environment, the setup is described as relatively straightforward; for others, it is a meaningful barrier.

Performance when running fully local models, rather than cloud API endpoints, depends heavily on available VRAM and hardware, with context handoff between multiple specialized models a known challenge. For multi-agent tasks running several models in parallel, the resource requirements escalate quickly.

The project's documentation, while improving, still has gaps for enterprise integration scenarios. There has been no independent public security audit of the sandboxed execution environment, which represents a non-trivial attack surface if exposed to untrusted inputs.

And the ecosystem, while growing fast, is weeks old.
The plugin and skill library that would make DeerFlow as mature as established orchestration frameworks simply does not exist yet.

What does it mean for enterprises in the AI transformation age?

The deeper significance of DeerFlow 2.0 may be less about the tool itself and more about what it represents in the broader race to define autonomous AI infrastructure.

DeerFlow's emergence as a fully capable, self-hostable, MIT-licensed agentic orchestrator adds yet another twist to the ongoing race among enterprises, as well as AI builders and model providers themselves, to turn generative AI models into something more than chatbots: something closer to full-time, or at least part-time, employees, capable of both communication and reliable action.

In a sense, it marks the natural next wave after OpenClaw: whereas that open-source tool sought to create a dependable, always-on autonomous AI agent the user could message, DeerFlow is designed to allow a user to deploy a fleet of them and keep track of them, all within the same system.

The decision to implement it in your enterprise hinges on whether your organization’s workload demands "long-horizon" execution: complex, multi-step tasks spanning minutes to hours that involve deep research, coding, and synthesis. Unlike a standard LLM interface, this "SuperAgent" harness decomposes broad prompts into parallel sub-tasks performed by specialized experts. This architecture is specifically designed for high-context workflows where a single-pass response is insufficient and where "vibe coding" or real-time file manipulation in a secure environment is necessary.

The primary condition for use is the technical readiness of an organization’s hardware and sandbox environment. Because each task runs within an isolated Docker container with its own filesystem, shell, and browser, DeerFlow acts as a "computer-in-a-box" for the agent.
This makes it ideal for data-intensive workloads or software engineering tasks where an agent must execute and debug code safely without contaminating the host system. However, this "batteries-included" runtime places a significant burden on the infrastructure layer; decision-makers must ensure they have the GPU clusters and VRAM capacity to support multi-agent fleets running in parallel, as the framework's resource requirements escalate quickly during complex tasks.

Strategic adoption is often a calculation between the overhead of seat-based SaaS subscriptions and the control of self-hosted open-source deployments. The MIT License positions DeerFlow 2.0 as a highly capable, royalty-free alternative to proprietary agent platforms, potentially functioning as a cost ceiling for the entire category. Enterprises should favor adoption if they prioritize data sovereignty and auditability, as the framework is model-agnostic and supports fully local execution with models like DeepSeek or Kimi. If the goal is to commoditize a digital workforce while maintaining total ownership of the tech stack, the framework provides a compelling, if technically demanding, benchmark.

Ultimately, the decision to deploy must be weighed against the inherent risks of an autonomous execution environment and its jurisdictional provenance. While sandboxing provides isolation, the ability of agents to execute bash commands creates a non-trivial attack surface that requires rigorous security governance and auditability. Furthermore, because the project is a ByteDance-led initiative via Volcengine and BytePlus, organizations in regulated sectors must reconcile its technical performance with emerging software-origin standards. Deployment is most appropriate for teams comfortable with a CLI-first, Docker-heavy setup who are ready to trade the convenience of a consumer product for a sophisticated and extensible SuperAgent harness.
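
The lead-agent pattern described in this article (decompose a task, run sub-agents in parallel with isolated contexts, then synthesize the results) can be sketched in a few lines of Python. This is only an illustration of the general fan-out/fan-in idea, not DeerFlow's code or API: `decompose`, `run_sub_agent`, `synthesize` and `lead_agent` are hypothetical names, and the sub-agent is a stub standing in for a model call or a sandboxed command.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class SubTaskResult:
    subtask: str
    output: str


def decompose(task: str) -> list[str]:
    """Hypothetical planner: split a broad task into three fixed sub-tasks.

    A real lead agent would ask a model to produce the plan.
    """
    return [f"{task}: research", f"{task}: analysis", f"{task}: draft"]


async def run_sub_agent(subtask: str) -> SubTaskResult:
    """Hypothetical sub-agent; its context is a fresh dict shared with no sibling."""
    context = {"subtask": subtask}
    await asyncio.sleep(0)  # placeholder for a model call or sandboxed command
    return SubTaskResult(subtask, f"done({context['subtask']})")


def synthesize(results: list[SubTaskResult]) -> str:
    """Merge the sub-agent outputs into one deliverable."""
    return "\n".join(f"- {r.subtask} -> {r.output}" for r in results)


async def lead_agent(task: str) -> str:
    subtasks = decompose(task)
    # Fan out: sub-agents run concurrently; gather returns results in input order.
    results = await asyncio.gather(*(run_sub_agent(s) for s in subtasks))
    # Fan in: combine everything into a single report.
    return synthesize(list(results))


report = asyncio.run(lead_agent("EV market trends"))
print(report)
```

In a real deployment the stub would be replaced by calls into whatever model endpoint and sandbox the team has chosen; the structure of the fan-out and the final merge stays the same.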

- Air Street becomes one of the largest solo VCs in Europe with $232M fund on March 23, 2026

London’s Air Street Capital has raised a large Fund III with eyes locked on backing early-stage European and North American AI companies.

- The US bans all new foreign-made network routers on March 23, 2026

The Federal Communications Commission has released a notice today designating any consumer routers manufactured outside the US as a security risk. The rule states that new foreign-made product models for network routers will land on the Covered List, a set of communications equipment seen as having an unacceptable risk to national security. Previously purchased routers can still be used, and retailers can still sell models that were approved under the prior FCC policies. In an exception to the usual rule, routers included on the Covered List can continue to receive updates at least through March 1, 2027, although the date could potentially be extended.

The move stems from a goal in the White House's 2025 national security strategy that reads: "the United States must never be dependent on any outside power for core components, from raw materials to parts to finished products, necessary to the nation’s defense or economy." The notice from the FCC states that companies can apply for conditional approval for new products from the Department of War or the Department of Homeland Security. However, that requires the businesses to provide a plan for shifting at least some of their manufacturing to the US in order to receive that conditional approval.

Few, if any, brands known for consumer-grade routers currently build products stateside. It seems likely this sweeping provision could face legal challenges from, and cause confusion for, the many companies that have production facilities overseas. In addition to Chinese tech giants like TP-Link, US companies will also be affected. Netgear, Eero and Google Nest are all headquartered domestically but have manufacturing in Asia. At least some of that manufacturing activity happens in regions like Taiwan that have historically been on good terms with the US. Until the sector sorts out this new restriction, don't expect to see any new router models on store shelves.

This article originally appeared on Engadget at https://www.engadget.com/big-tech/the-us-bans-all-new-foreign-made-network-routers-223622966.html?src=rss

- Using your AI chatbot as a search engine? Be careful what you believe on March 23, 2026

During the First World War, the British government was looking for ways to help people stretch their limited food supplies. It found pamphlets from a noted 19th-century herbalist who said rhubarb leaves could be used as a vegetable along with the stalks.

- New framework bridges gaps in power grid operations with AI technology on March 23, 2026

New research led by Colorado State University highlights a critical need for system-level thinking and innovation in shaping the electric power grid of the future. Professor Zongjie Wang recently published a paper in Scientific Reports outlining a novel framework. The proposed method helps elucidate how different parts of the grid—transmission and distribution operations—work together to make holistic decisions, without requiring system centralization.

- AI bot offers speedy, revenue-saving building energy modeling on March 23, 2026

Buildings researchers at the Department of Energy's Pacific Northwest National Laboratory have released a new AI-driven, autonomous bot that could help speed up the energy modeling process for commercial building construction. Long before construction begins, commercial building teams evaluate a building's expected energy consumption to inform design decisions, estimate operating costs and comply with relevant state and local energy codes.

- Waste heat to power wearables? A new low-cost material design could help on March 23, 2026

A new sustainable approach to energy harvesting could transform how wasted heat is turned into electricity, thanks to a breakthrough in low-cost, flexible materials developed by researchers at the University of Surrey's Advanced Technology Institute (ATI).

- Expanding storage capacity with smart gate semiconductor technology on March 23, 2026

From smartphones to large-scale AI servers, most digital information in modern society is stored in NAND flash memory. KAIST researchers have developed an innovative technology that can overcome the limitations of next-generation semiconductors, where more data must be stored in smaller spaces. This advancement is expected to serve as a key enabling technology for realizing ultra-high-capacity memory.

- Show us your agents: VB Transform 2026 is looking for the most innovative agentic AI technologies on March 23, 2026

The Innovation Showcase is back at Transform 2026: The Orchestration of Enterprise Agentic AI at Scale, taking place July 14 and 15 in Menlo Park.

This year, we are moving beyond generative AI to autonomous agents, focusing on enterprise agentic orchestration, LLM observability and evaluation (LLMOps), RAG infrastructure, inference platforms and optimization, and agentic AI security and identity.

We’re on the hunt for the 10 most innovative autonomous agent technologies poised to redefine the enterprise. If you have built agents that can reason, plan and execute complex workflows independently to drive real business value, we want to see you on our main stage.

Innovators chosen to present at VB Transform 2026 will have the opportunity to share their tech with an audience of hundreds of AI industry decision-makers. You’ll receive direct, live feedback from a curated panel of enterprise tech thought leaders. Beyond the stage, every presenter receives exclusive editorial coverage from VentureBeat, positioning your agentic AI technology in front of our millions of monthly readers.

Who should apply?

We are looking for dynamic companies with compelling agentic AI technologies that are ready for prime time. Whether you are building specialized autonomous agents to support workers or the orchestration layers that manage AI agents, we want to hear your story.

We will select up to 10 candidates across two tracks: up to five seed to early-stage Series A startups (raised $50M or less) and up to five Series B or later startups, or units within mature, large companies (raised/allocated more than $50M).

If you have a product that delivers tangible enterprise results and a vision for the future of autonomous work, don't miss this opportunity. Application deadline: June 1, 2026, at 5 p.m. PT.

Submit here

Read about last year’s winner: Solo.io

- Artificial neural network reproduces gait patterns of four-legged animals on March 23, 2026

Imagine a horse stumbling on a rock. It regains momentum, then hits bumpier terrain and slows to a walk. Back on steady ground, the horse picks up its pace to catch up with the herd. How is the horse able to transition between these different gaits? Researchers at Brown University's Carney Institute for Brain Science have developed an artificial neural network that shows how a four-legged creature may generate multiple distinct patterns in gait. Their research provides new insights into how the brain may process complex behaviors.

- Five-level model rates humanoid robots across mobility, manipulation and cognition on March 23, 2026

A research team from Fraunhofer HNFIZ has published a newly developed evaluation model that classifies the technical capabilities of humanoids into five levels. Applications can also be classified based on the required robot capabilities. The model makes humanoids comparable, facilitates finding the right humanoid for a specific application, and highlights open issues in technology development.

- One-step coating keeps fabrics superhydrophobic after tens of thousands of abrasion cycles on March 23, 2026

Developing robust water-repellent textiles is critical for outdoor, protective, and industrial applications. However, achieving long-lasting water repellency under mechanical stress has been a major challenge.

- Safety method for testing diffusion welds could clear path for compact nuclear reactors on March 23, 2026

Compact heat exchangers could enable advanced nuclear reactors that are smaller, more efficient and more affordable—but a critical step in their adoption is verifying they can withstand the high temperatures and possibly high pressures in next-gen reactors while still staying structurally sound.

- Claude Code and Cowork can now use your computer on March 23, 2026

Anthropic announced today that its Claude Code and Claude Cowork tools are being updated to accomplish tasks using your computer. The latest update will see these AI resources become capable of opening files, using the browser and running dev tools. When enabled, the Claude AI chatbot will first prioritize connectors to supported services such as the Google Workspace suite or Slack, but if a connector isn't available, it will still be able to execute an assigned task. Claude should ask for permission before taking these actions, but Anthropic still recommended not using this feature to handle sensitive information as a precaution.

Claude computer use will initially be available to Claude Pro and Claude Max subscribers on macOS. This feature is still in a research preview, so it will continue to be adjusted based on Anthropic's user feedback. It will also support use with Anthropic's Dispatch feature, which allows a person to message the chatbot in a single continuous conversation across phone and desktop.

Claude Cowork was introduced in January. It's an iteration of the Claude Code AI agent for programmers that is designed for more casual users.

This article originally appeared on Engadget at https://www.engadget.com/ai/claude-code-and-cowork-can-now-use-your-computer-210000126.html?src=rss

- New device and method detect percentage of recycled plastic in plastic products on March 23, 2026

Recycled plastics are promoted on everything from water bottles and fleece jackets to shopping bags and yogurt cups: "This product is made with XX% recycled plastic." Verifying such claims, however, is another matter because there is no quick and reliable way to measure how much recycled plastic these products contain. University at Buffalo researchers are addressing this problem by combining several scientific tests, as well as artificial intelligence, to create a new method for differentiating recycled plastic from new plastic.

- Atomic disorder strategy could help high-capacity batteries last longer on March 23, 2026

Researchers at UNIST, in collaboration with the Pohang Accelerator Laboratory (PAL) and KAIST, have introduced a novel approach to stabilizing high-capacity battery materials. By intentionally inducing atomic-level disorder within lithium-rich layered oxide (LRLO) cathodes, the team has effectively minimized structural degradation and energy losses, paving the way for next-generation batteries with higher energy density and longer lifespan.

- Bernie Sanders’ AI ‘gotcha’ video flops, but the memes are great on March 23, 2026

Sen. Bernie Sanders thinks he's tricked Claude into revealing the AI industry's secrets, but he really just exposed how agreeable chatbots can become.

- EA is nuking Battlefield Hardline on consoles on March 23, 2026

EA has put another game on the chopping block, or at least the console versions of it. The company says it will delist the PS4 and Xbox One versions of Battlefield Hardline from digital storefronts on May 22, and shut down the online services on June 22. The single-player campaign will remain playable for those who own the game. The PC version of Battlefield Hardline isn’t affected by these changes.

In its announcement on X, EA didn't explain exactly why it's ceasing support for the game on PS4 and Xbox One. It pointed readers to a FAQ on its website that lays out some of the typical reasons why it ends online support for its games. These include factors like declining player bases. Battlefield Hardline, which was released in 2015, will still be available on Steam as well as EA's own PC app. The Steam version has a peak concurrent player count of 41 so far this year.

It's hardly uncommon for a publisher to end online services for games with declining player bases, but it's an issue that's come into greater focus over the last few years thanks in part to the Stop Killing Games movement. EA alone has sunsetted dozens of games. Its website has a full accounting of these, spread across three webpages.

This article originally appeared on Engadget at https://www.engadget.com/gaming/ea-is-nuking-battlefield-hardline-on-consoles-193321551.html?src=rss

- AI on deck: Assessing impact of MLB's new ball-strike system on March 23, 2026

For 150 years, Major League Baseball (MLB) players and fans have accepted that an umpire missing a few balls and strikes is just part of the game. But this spring, MLB is rolling out an artificial intelligence-augmented camera system that will provide a second opinion for players to tap if they think an umpire whiffed.

- You thought the generalist was dead: in the 'vibe work' era, they're more important than ever on March 23, 2026

Not long ago, the idea of being a “generalist” in the workplace had a mixed reputation. The stereotype was the “jack of all trades” who could dabble in many disciplines but was a “master of none.” And for years, that was more or less true. Most people simply didn’t have access to the expertise required to do highly cross-functional work. If you needed a new graphic, you waited for a designer. If you needed to change a contract, you waited for legal. In smaller organizations and startups, this waiting game was typically replaced with inaction or improvisation, often with questionable results.

AI is changing this faster than any technology shift I’ve seen. It’s allowing people to succeed at tasks beyond their normal area of expertise.

Anthropic found that AI is “enabling engineers to become more full-stack in their work,” meaning they’re able to make competent decisions across a much wider range of interconnected technologies. A direct consequence is that tasks that would have been left aside due to lack of time or expertise are now being accomplished (27% of AI-assisted work, per Anthropic's study). This shift closely mirrors the effects of past revolutionary technologies. The invention of the automobile or the computer did not bring us a wealth of leisure time; it mainly led us to start doing work that could not be done before.

With AI as a guide, anyone can now expand their skillsets and augment their expertise to accomplish more. This fundamentally changes what people can do, who can do it, how teams operate, and what leaders should expect.

Well, not so fast. The AI advances have been incredible, and although 2025 may not have fully delivered on its promise of bringing AI agents to the workforce, there’s no reason to doubt that promise is well on its way to being kept. But for now, AI is not perfect. If to err is human, to trust AI not to err is foolish.

One of the biggest challenges of working with AI is identifying hallucinations.
The term was coined, I assume, not as a cute way to refer to factual errors, but as quite an apt way of describing the conviction that AI exhibits in its erroneous answers. We humans have a clear bias toward confident people, which probably explains the number of smart people getting burned after taking ChatGPT at face value. And if experts can get fooled by an overconfident AI, how can generalists hope to harness the power of AI without making the same mistake? Citizen guardrails give way to vibe freedomIt’s tempting to compare today’s AI vibe coding wave to the rise of low- and no-code tools. No-code tools gave users freedom to build custom software tailored to their needs. However, the comparison doesn’t quite hold. The so-called “citizen developers” could only operate inside the boundaries the tool allowed. These tight constraints were limiting, but they had the benefit of saving the users from themselves — preventing anything catastrophic.AI removes those boundaries almost entirely, and with great freedom comes responsibilities that most people aren’t quite prepared for. The first stage of 'vibe freedom' is one of unbridled optimism encouraged by a sycophantic AI. “You’re absolutely correct!” The dreaded report that would have taken all night looks better than anything you could have done yourself and only took a few minutes. The next stage comes almost by surprise — there’s something that’s not quite right. You start doubting the accuracy of the work — you review and then wonder if it wouldn’t have been quicker to just do it yourself in the first place.Then comes bargaining and acceptance. You argue with the AI, you’re led down confusing paths, but slowly you start developing an understanding — a mental model of the AI mind. You learn to recognize the confidently incorrect, you learn to push back and cross-check, you learn to trust and verify. 

The generalist becomes the trust layer

This is a skill that can be learned, and it can only be learned on the job, through regular practice. This doesn’t require deep specialization, but it does require awareness. Curiosity becomes essential. So does the willingness to learn quickly, think critically, spot inconsistencies, and rely on judgment rather than treating AI as infallible.

That’s the new job of the generalist: not to be an expert in everything, but to understand the AI mind enough to catch when something is off, and to defer to a true specialist when the stakes are high. The generalist becomes the human trust layer sitting between the AI’s output and the organization’s standards. They decide what passes and what gets a second opinion.

That said, this only works if the generalist clears a minimum bar of fluency. There’s a big difference between “broadly informed” and “confidently unaware.” AI makes that gap easier to miss.

Impact on teams and hiring

Clearly, specialists will not be replaced by AI anytime soon. Their work remains critical. It will evolve to become more strategic.

What AI changes is everything around the edges: roles that felt important but were hard to fill, tasks that sat in limbo because no expert was available, backlogs created by waiting for highly skilled people to review simple work. Now, a generalist can get much farther on their own, and specialists can focus on the hardest problems. We’re already starting to see an impact in the hiring landscape. Companies are looking to bring on individuals who are comfortable navigating AI: people who embrace it and use it to take on projects outside of their comfort zone.

Performance expectations will shift too. Many leaders are already looking less at productivity alone, and more at how effectively someone uses AI. We see token usage not as a measure of cost, but as an indicator of AI adoption, and perhaps optimistically, as a proxy for productivity.

Making vibe work viable

- Use AI to enhance work, not to wing it: You will get burned letting AI loose. It requires guidance and oversight.
- Learn when to trust and when to verify: Build an understanding of the AI mind so you can exercise good judgment on the work produced. When in doubt or when the stakes are high, defer to specialists.
- Set clear organizational standards: AI thrives on context, and humans do too. Invest in documentation of processes, procedures, and best practices.
- Keep humans in the loop: AI shouldn’t remove oversight. It should make oversight easier.

Without these factors, AI work stays in the “vibe” stage. With them, it becomes something the business can actually rely on.

Return of the generalist

The emerging, AI-empowered generalist is defined by curiosity, adaptability, and the ability to evaluate the work AI produces. They can span multiple functions, not because they’re experts in each one, but because AI gives them access to specialist-level expertise. Most importantly, this new generation of generalists knows when and how to apply their human judgment and critical thinking. That’s the real determining factor for turning vibes into something reliable, sustainable, and viable in the long run.

Cedric Savarese is founder and CEO of FormAssembly.

- Your smart home can be easily hacked. New safety standards will help, but stay vigilant on March 23, 2026

On a quiet suburban street, a modern Australian home wakes before its owners do.

- Apple will reportedly start stuffing ads into the Maps app on March 23, 2026

Apple is reportedly planning on inserting ads into the Maps app, according to Bloomberg's Mark Gurman. An announcement could come as soon as this month, with the ads themselves appearing on iPhones this summer. This will likely work similarly to ads in Google Maps and Yelp, which let retailers and brands bid for coverage with particular search queries. I've personally never found the ads in Google Maps to be that annoying, so let's hope Apple's implementation is similar. This potential ad revenue could seriously bolster Apple's services business, which currently generates $100 billion a year for the company. This division accounts for around 25 percent of annual revenue but faces challenges in both the short term and long term, as regulators around the world push for changes to App Store policies. Apple has yet to comment on the matter. This idea has been floating around since last year, with rumors going all the way back to 2022. The company already displays ads on the App Store and on the News app, so the jump to Maps isn't coming out of left field.

This article originally appeared on Engadget at https://www.engadget.com/apps/apple-will-reportedly-start-stuffing-ads-into-the-maps-app-182311634.html?src=rss

- Vibe-coding startup Lovable is on the hunt for acquisitions on March 23, 2026

Lovable's founder said the fast-growing vibe-coding startup is looking for startups and teams to join its company.

- Apple sets June date for WWDC 2026, teasing ‘AI advancements’ on March 23, 2026

Apple will host its next Worldwide Developers Conference the week of June 8. The company is expected to announce major updates to Siri with advanced AI capabilities.

- Wing expands its drone delivery service to the Bay Area on March 23, 2026

Wing's drone deliveries are coming full circle after adding the Bay Area to its service locations. The drone delivery startup has been rapidly expanding to metro areas across the US, but is now targeting the tech-friendly Silicon Valley region. Going back to its inaugural deliveries, Wing ferried office supplies across Google's Mountain View campus in the Bay Area with its automated drones. It was still a startup out of Google's X, The Moonshot Factory incubator at the time, but early users were already asking for home delivery services, according to Wing. Now, Wing's latest delivery drones can deliver groceries, food, or whatever else fits in a small package weighing up to five pounds to Bay Area residents in 30 minutes or less. It may not be that common to spot a Wing drone yet, but the company expanded its service to 150 more Walmart locations across the US, including Los Angeles, St. Louis, Cincinnati and Miami, earlier this year. The drone delivery company also extended its hours of operation to 9 AM to 9 PM in its Charlotte and Dallas-Fort Worth metros, with approval from the Federal Aviation Administration. Beyond the recent Bay Area expansion, Wing has previously mentioned Orlando and Tampa as potential markets to enter.

This article originally appeared on Engadget at https://www.engadget.com/transportation/wing-expands-its-drone-delivery-service-to-the-bay-area-175748410.html?src=rss

- Bird‑like robots promise greater flexibility and control than drones on March 23, 2026

A bird banking in a crosswind doesn't rely on spinning blades. Its wings flex, twist and respond instantly to its environment. Engineers at Rutgers University have taken a major step toward building bird-like drones that move the same way, flapping their wings like real birds, using electricity-driven materials instead of conventional electromagnetic motors to power them.

- Apple's WWDC 2026 is set for June 8-12 on March 23, 2026

Apple announced that this year's Worldwide Developers Conference (WWDC) will take place from June 8-12. The company tends to be consistent with event timing, so it's no surprise that CEO Tim Cook will take the stage for the keynote on June 8, most likely at 1PM ET. Much of WWDC will take place online and will be free to attend, though there will be an in-person component for select developers, students and media at Apple Park in Cupertino, California. You'll be able to take in WWDC via the Apple Developer app, website and YouTube channel. It will also be available in China on the Apple Developer Bilibili channel. What should we expect this time around? This is a software-focused event and all indications point toward a reveal of the upcoming "27" operating systems. This would include iOS 27, iPadOS 27, macOS 27, visionOS 27 and watchOS 27. We don't know for certain what new features these operating system updates will bring to the table, with Bloomberg's Mark Gurman suggesting that WWDC will be "a fairly muted affair this year." Rumors have indicated that iOS 27 will deliver much-needed improvements to Apple Intelligence along with the delayed Siri overhaul. Reports also suggest the presence of split-pane multitasking, a redesigned Health app and a new battery management system for iPhones. In any event, we don't have that long to wait. Engadget will be on hand to report on all of the announcements and reveals.

This article originally appeared on Engadget at https://www.engadget.com/apps/apples-wwdc-2026-is-set-for-june-8-12-171359493.html?src=rss

- Radiation‑hardened Wi‑Fi chip survives 500 kGy for nuclear plant decommissioning robots on March 23, 2026

When a nuclear plant reaches the end of its life or is damaged, it must be decommissioned. This process can take more than 20 years and includes decontamination, dismantling, and handling radioactive materials so the site can be reused. According to the International Atomic Energy Agency, almost half of the 423 nuclear power reactors in operation today are expected to enter decommissioning by 2050.

- TVs keep getting more pixels—but we are approaching the limits of what our eyes can actually see on March 23, 2026

I remember sitting very close to the television as a child and seeing the image was made up of tiny colored dots, each of which broke down into miniature vertical strips of red, green and blue when I looked even closer.

- Polymarket is cracking down on insider trading with updated rules on March 23, 2026

Polymarket announced that it's taking insider trading more seriously. As seen in its latest press release, the prediction market updated its market integrity rules, specifically those concerning insider trading and market manipulation. While Polymarket is taking the initiative to update its rules, it's likely a response to the rise in suspicious bets, whether it's about the US capture of Nicolás Maduro or the release of a new product from OpenAI. As first reported by Bloomberg, Polymarket is targeting three specific forms of trading activity. First off, users aren't allowed to trade on "stolen confidential information," or any behind-the-scenes knowledge about an outcome that people wouldn't otherwise have access to. As an extension, Polymarket traders are also prohibited from taking advantage of "illegal tips," which means that even if someone else has access to confidential information and passes it along, you still can't trade on it. Lastly, anyone who has a "position of authority or influence sufficient to affect the outcome of the underlying event" isn't allowed to trade on said event. Users can expect more surveillance and enforcement around these new rules, too. Polymarket explained that if it or its users find "unusual or potentially questionable trading activity," the platform would conduct a review and, if necessary, ban the wallet address, refer the issue to law enforcement or impose "monetary penalties." If you're curious what the punishment for insider trading on these prediction markets looks like, a recent case saw MrBeast's video editor suspended for two years from the platform and fined five times the amount of his initial trade size after Kalshi concluded its investigation.

This article originally appeared on Engadget at https://www.engadget.com/big-tech/polymarket-is-cracking-down-on-insider-trading-with-updated-rules-163928655.html?src=rss

- Billionaire OnlyFans owner Leonid Radvinsky has died from cancer at 43 on March 23, 2026

Leonid Radvinsky, the billionaire owner of OnlyFans, has died. He passed "peacefully after a long battle with cancer" at age 43, according to a statement from the platform published by Forbes. He was born in Ukraine, but grew up in Chicago. Radvinsky didn't create OnlyFans. He purchased it back in 2018, though he is largely credited with transforming it from a niche website to a gigantic porn empire. The platform became so huge that reports have indicated that Radvinsky personally made nearly $2 million every day in 2024. His net worth had grown to $4.7 billion at the time of his death, more than double what it was in 2021. It has been reported that he was in talks to sell OnlyFans in a deal valued at $8 billion. It's long been rumored that he bought a controlling stake in the platform for around $30 million back in 2018, though that number has never been officially confirmed. Radvinsky was famously secretive and avoided giving interviews, but his history is not without controversy. He built his fortune with websites that were much shadier than OnlyFans. Radvinsky founded a similar site called MyFreeCams back in 2004 when he was in college, which has been involved in numerous scandals. He also founded a website called Cybertania, which provided links to various pornography sites. Some of these links claimed to direct users to illegal content involving children and animals. Forbes did a deep dive into this and found that the site didn't actually lead to the offending content, but it's still likely that Radvinsky and the platform made money by getting people to click on the links.
Records also indicate that Radvinsky held domain names like "websyoungest.com" and "aretheylegal.com" until 2014. It's currently unknown what those sites hosted. He's also been sued for everything from spamming users to impersonating large companies like Microsoft and Amazon to direct traffic to his pornography sites. These cases were all settled outside of court for undisclosed sums of money.

This article originally appeared on Engadget at https://www.engadget.com/big-tech/billionaire-onlyfans-owner-leonid-radvinsky-has-died-from-cancer-at-43-163211324.html?src=rss

- The hardest question to answer about AI-fueled delusions on March 23, 2026

This story originally appeared in The Algorithm, our weekly newsletter on AI. To get stories like this in your inbox first, sign up here. I was originally going to write this week’s newsletter about AI and Iran, particularly the news we broke last Tuesday that the Pentagon is making plans for AI companies to train on…

- 'Neuron-freezing' technique can stop LLMs from giving users unsafe responses on March 23, 2026

Researchers have identified key components in large language models (LLMs) that play a critical role in ensuring these AI systems provide safe responses to user queries. The researchers used these insights to develop and demonstrate AI training techniques that improve LLM safety while minimizing the "alignment tax," meaning the AI becomes safer without significantly affecting performance.

- Insect-inspired robot tracks odors even with only one working 'antenna' on March 23, 2026

A collaborative research group has developed a bio-inspired robotic system based on insect behavior which can locate odor sources both indoors and outdoors with consistent accuracy, even if one of its two sensors fails. The team includes Assistant Professor Shigaki Shunsuke of the National Institute of Informatics (NII), Professor Kurabayashi Daisuke of the School of Engineering at Science Tokyo, and Associate Professor Owaki Dai of the Graduate School of Engineering at Tohoku University.

- Testing autonomous agents (Or: how I learned to stop worrying and embrace chaos) on March 23, 2026

Look, we've spent the last 18 months building production AI systems, and we'll tell you what keeps us up at night: it's not whether the model can answer questions. That's table stakes now. What haunts us is the mental image of an agent autonomously approving a six-figure vendor contract at 2 a.m. because someone typo'd a config file.

We've moved past the era of "ChatGPT wrappers" (thank God), but the industry still treats autonomous agents like they're just chatbots with API access. They're not. When you give an AI system the ability to take actions without human confirmation, you're crossing a fundamental threshold. You're not building a helpful assistant anymore; you're building something closer to an employee. And that changes everything about how we need to engineer these systems.

The autonomy problem nobody talks about

Here's what's wild: We've gotten really good at making models that *sound* confident. But confidence and reliability aren't the same thing, and the gap between them is where production systems go to die.

We learned this the hard way during a pilot program where we let an AI agent manage calendar scheduling across executive teams. Seems simple, right? The agent could check availability, send invites, handle conflicts. Except, one Monday morning, it rescheduled a board meeting because it interpreted "let's push this if we need to" in a Slack message as an actual directive. The model wasn't wrong in its interpretation; it was plausible. But plausible isn't good enough when you're dealing with autonomy.

That incident taught us something crucial: The challenge isn't building agents that work most of the time.
It's building agents that fail gracefully, know their limitations, and have the circuit breakers to prevent catastrophic mistakes.

What reliability actually means for autonomous systems

[Figure: Layered reliability architecture]

When we talk about reliability in traditional software engineering, we've got decades of patterns: Redundancy, retries, idempotency, graceful degradation. But AI agents break a lot of our assumptions.

Traditional software fails in predictable ways. You can write unit tests. You can trace execution paths. With AI agents, you're dealing with probabilistic systems making judgment calls. A bug isn't just a logic error; it's the model hallucinating a plausible-sounding but completely fabricated API endpoint, or misinterpreting context in a way that technically parses but completely misses the human intent.

So what does reliability look like here? In our experience, it's a layered approach.

Layer 1: Model selection and prompt engineering

This is foundational but insufficient. Yes, use the best model you can afford. Yes, craft your prompts carefully with examples and constraints. But don't fool yourself into thinking that a great prompt is enough. I've seen too many teams ship "GPT-4 with a really good system prompt" and call it enterprise-ready.

Layer 2: Deterministic guardrails

Before the model does anything irreversible, run it through hard checks. Is it trying to access a resource it shouldn't? Is the action within acceptable parameters? We're talking old-school validation logic: regex, schema validation, allowlists. It's not sexy, but it's effective.

One pattern that's worked well for us: Maintain a formal action schema. Every action an agent can take has a defined structure, required fields, and validation rules. The agent proposes actions in this schema, and we validate before execution.
If validation fails, we don't just block it; we feed the validation errors back to the agent and let it try again with context about what went wrong.

Layer 3: Confidence and uncertainty quantification

Here's where it gets interesting. We need agents that know what they don't know. We've been experimenting with agents that can explicitly reason about their confidence before taking actions. Not just a probability score, but actual articulated uncertainty: "I'm interpreting this email as a request to delay the project, but the phrasing is ambiguous and could also mean..."

This doesn't prevent all mistakes, but it creates natural breakpoints where you can inject human oversight. High-confidence actions go through automatically. Medium-confidence actions get flagged for review. Low-confidence actions get blocked with an explanation.

Layer 4: Observability and auditability

[Figure: Action Validation Pipeline]

If you can't debug it, you can't trust it. Every decision the agent makes needs to be loggable, traceable, and explainable. Not just "what action did it take" but "what was it thinking, what data did it consider, what was the reasoning chain?"

We've built a custom logging system that captures the full large language model (LLM) interaction: the prompt, the response, the context window, even the model temperature settings. It's verbose as hell, but when something goes wrong (and it will), you need to be able to reconstruct exactly what happened. Plus, this becomes your dataset for fine-tuning and improvement.

Guardrails: The art of saying no

Let's talk about guardrails, because this is where engineering discipline really matters. A lot of teams approach guardrails as an afterthought: "we'll add some safety checks if we need them." That's backwards. Guardrails should be your starting point.

We think of guardrails in three categories.

Permission boundaries

What is the agent physically allowed to do? This is your blast radius control.
Even if the agent hallucinates the worst possible action, what's the maximum damage it can cause?

We use a principle called "graduated autonomy." New agents start with read-only access. As they prove reliable, they graduate to low-risk writes (creating calendar events, sending internal messages). High-risk actions (financial transactions, external communications, data deletion) either require explicit human approval or are simply off-limits.

One technique that's worked well: Action cost budgets. Each agent has a daily "budget" denominated in some unit of risk or cost. Reading a database record costs 1 unit. Sending an email costs 10. Initiating a vendor payment costs 1,000. The agent can operate autonomously until it exhausts its budget; then, it needs human intervention. This creates a natural throttle on potentially problematic behavior.

[Figure: Graduated Autonomy and Action Cost Budget]

Semantic boundaries

What should the agent understand as in-scope vs out-of-scope? This is trickier because it's conceptual, not just technical.

I've found that explicit domain definitions help a lot. Our customer service agent has a clear mandate: handle product questions, process returns, escalate complaints. Anything outside that domain (someone asking for investment advice, technical support for third-party products, personal favors) gets a polite deflection and escalation.

The challenge is making these boundaries robust to prompt injection and jailbreaking attempts. Users will try to convince the agent to help with out-of-scope requests. Other parts of the system might inadvertently pass instructions that override the agent's boundaries. You need multiple layers of defense here.

Operational boundaries

How much can the agent do, and how fast? This is your rate limiting and resource control.

We've implemented hard limits on everything: API calls per minute, maximum tokens per interaction, maximum cost per day, maximum number of retries before human escalation.
These might seem like artificial constraints, but they're essential for preventing runaway behavior.

We once saw an agent get stuck in a loop trying to resolve a scheduling conflict. It kept proposing times, getting rejections, and trying again. Without rate limits, it sent 300 calendar invites in an hour. With proper operational boundaries, it would've hit a threshold and escalated to a human after attempt number 5.

Agents need their own style of testing

Traditional software testing doesn't cut it for autonomous agents. You can't just write test cases that cover all the edge cases, because with LLMs, everything is an edge case.

What's worked for us:

Simulation environments

Build a sandbox that mirrors production but with fake data and mock services. Let the agent run wild. See what breaks. We do this continuously: every code change goes through 100 simulated scenarios before it touches production.

The key is making scenarios realistic. Don't just test happy paths. Simulate angry customers, ambiguous requests, contradictory information, system outages. Throw in some adversarial examples. If your agent can't handle a test environment where things go wrong, it definitely can't handle production.

Red teaming

Get creative people to try to break your agent. Not just security researchers, but domain experts who understand the business logic. Some of our best improvements came from sales team members who tried to "trick" the agent into doing things it shouldn't.

Shadow mode

Before you go live, run the agent in shadow mode alongside humans. The agent makes decisions, but humans actually execute the actions. You log both the agent's choices and the human's choices, and you analyze the delta.

This is painful and slow, but it's worth it. You'll find all kinds of subtle misalignments you'd never catch in testing. Maybe the agent technically gets the right answer, but with phrasing that violates company tone guidelines. Maybe it makes legally correct but ethically questionable decisions.
Shadow mode surfaces these issues before they become real problems.

The human-in-the-loop pattern

[Figure: Three Human-in-the-Loop Patterns]

Despite all the automation, humans remain essential. The question is: Where in the loop?

We're increasingly convinced that "human-in-the-loop" is actually several distinct patterns:

- Human-on-the-loop: The agent operates autonomously, but humans monitor dashboards and can intervene. This is your steady-state for well-understood, low-risk operations.
- Human-in-the-loop: The agent proposes actions, humans approve them. This is your training wheels mode while the agent proves itself, and your permanent mode for high-risk operations.
- Human-with-the-loop: Agent and human collaborate in real-time, each handling the parts they're better at. The agent does the grunt work, the human does the judgment calls.

The trick is making these transitions smooth. An agent shouldn't feel like a completely different system when you move from autonomous to supervised mode. Interfaces, logging, and escalation paths should all be consistent.

Failure modes and recovery

Let's be honest: Your agent will fail. The question is whether it fails gracefully or catastrophically.

We classify failures into three categories:

- Recoverable errors: The agent tries to do something, it doesn't work, the agent realizes it didn't work and tries something else. This is fine. This is how complex systems operate. As long as the agent isn't making things worse, let it retry with exponential backoff.
- Detectable failures: The agent does something wrong, but monitoring systems catch it before significant damage occurs. This is where your guardrails and observability pay off. The agent gets rolled back, humans investigate, you patch the issue.
- Undetectable failures: The agent does something wrong, and nobody notices until much later. These are the scary ones. Maybe it's been misinterpreting customer requests for weeks. Maybe it's been making subtly incorrect data entries.
These accumulate into systemic issues.

The defense against undetectable failures is regular auditing. We randomly sample agent actions and have humans review them. Not just pass/fail, but detailed analysis. Is the agent showing any drift in behavior? Are there patterns in its mistakes? Is it developing any concerning tendencies?

The cost-performance tradeoff

Here's something nobody talks about enough: reliability is expensive.

Every guardrail adds latency. Every validation step costs compute. Multiple model calls for confidence checking multiply your API costs. Comprehensive logging generates massive data volumes.

You have to be strategic about where you invest. Not every agent needs the same level of reliability. A marketing copy generator can be looser than a financial transaction processor. A scheduling assistant can retry more liberally than a code deployment system.

We use a risk-based approach. High-risk agents get all the safeguards, multiple validation layers, extensive monitoring. Lower-risk agents get lighter-weight protections. The key is being explicit about these trade-offs and documenting why each agent has the guardrails it does.

Organizational challenges

We'd be remiss if we didn't mention that the hardest parts aren't technical; they're organizational.

Who owns the agent when it makes a mistake? Is it the engineering team that built it? The business unit that deployed it? The person who was supposed to be supervising it?

How do you handle edge cases where the agent's logic is technically correct but contextually inappropriate? If the agent follows its rules but violates an unwritten norm, who's at fault?

What's your incident response process when an agent goes rogue? Traditional runbooks assume human operators making mistakes. How do you adapt these for autonomous systems?

These questions don't have universal answers, but they need to be addressed before you deploy.
Clear ownership, documented escalation paths, and well-defined success metrics are just as important as the technical architecture.

Where we go from here

The industry is still figuring this out. There's no established playbook for building reliable autonomous agents. We're all learning in production, and that's both exciting and terrifying.

What we know for sure: The teams that succeed will be the ones who treat this as an engineering discipline, not just an AI problem. You need traditional software engineering rigor (testing, monitoring, incident response) combined with new techniques specific to probabilistic systems.

You need to be paranoid but not paralyzed. Yes, autonomous agents can fail in spectacular ways. But with proper guardrails, they can also handle enormous workloads with superhuman consistency. The key is respecting the risks while embracing the possibilities.

We'll leave you with this: Every time we deploy a new autonomous capability, we run a pre-mortem. We imagine it's six months from now and the agent has caused a significant incident. What happened? What warning signs did we miss? What guardrails failed?

This exercise has saved us more times than we can count. It forces you to think through failure modes before they occur, to build defenses before you need them, to question assumptions before they bite you.

Because in the end, building enterprise-grade autonomous AI agents isn't about making systems that work perfectly. It's about making systems that fail safely, recover gracefully, and learn continuously.

And that's the kind of engineering that actually matters.

Madhvesh Kumar is a principal engineer. Deepika Singh is a senior software engineer. Views expressed are based on hands-on experience building and deploying autonomous agents, along with the occasional 3 AM incident response that makes you question your career choices.
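
As a rough illustration of the guardrail layers the article above describes (a formal action schema, confidence tiers, and action cost budgets), here is a minimal Python sketch. The action names, the `Guardrail` class, and the confidence thresholds (0.9 and 0.5) are assumptions made up for this example; only the cost units (1, 10, 1,000) come from the article's own illustration. It is not the authors' implementation.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    EXECUTE = "execute"  # run automatically
    REVIEW = "review"    # hand to a human for approval
    BLOCK = "block"      # refuse and return the reasons


# Hypothetical action catalog: required fields and a risk cost per action type.
# Costs reuse the article's illustrative units (read=1, email=10, payment=1,000).
ACTION_SCHEMA = {
    "read_record": {"required": {"record_id"}, "cost": 1},
    "send_email": {"required": {"to", "body"}, "cost": 10},
    "vendor_payment": {"required": {"vendor_id", "amount"}, "cost": 1000},
}


@dataclass
class Guardrail:
    daily_budget: int
    spent: int = 0
    # Assumed cutoffs; the article gives no numeric thresholds.
    high_confidence: float = 0.9
    low_confidence: float = 0.5

    def validate(self, action: dict) -> list[str]:
        """Return validation errors; an empty list means the action is well-formed."""
        spec = ACTION_SCHEMA.get(action.get("type"))
        if spec is None:
            return [f"unknown action type: {action.get('type')!r}"]
        missing = spec["required"] - set(action.get("params", {}))
        return [f"missing field: {name}" for name in sorted(missing)]

    def decide(self, action: dict, confidence: float) -> tuple[Decision, list[str]]:
        errors = self.validate(action)
        if errors:
            # Returned so the caller can feed the errors back to the agent.
            return Decision.BLOCK, errors
        if confidence < self.low_confidence:
            return Decision.BLOCK, ["confidence below threshold"]
        cost = ACTION_SCHEMA[action["type"]]["cost"]
        if self.spent + cost > self.daily_budget:
            return Decision.REVIEW, ["daily risk budget exhausted"]
        if confidence < self.high_confidence:
            return Decision.REVIEW, ["medium confidence"]
        self.spent += cost  # only automatically executed actions consume budget
        return Decision.EXECUTE, []
```

With `Guardrail(daily_budget=20)`, a well-formed, high-confidence `send_email` would execute and consume 10 units, while a `vendor_payment` would be routed to review because its cost exceeds the remaining budget. Charging the budget only on automatic execution is one possible design choice; a stricter variant could also charge for human-approved actions.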

- Littlebird raises $11M for its AI-assisted ‘recall’ tool that reads your computer screen on March 23, 2026

Littlebird is building an AI that reads your screen in real time to capture context, answer questions, and automate tasks, without relying on screenshots.

- The three disciplines separating AI agent demos from real-world deployment on March 23, 2026

Getting AI agents to perform reliably in production, not just in demos, is turning out to be harder than enterprises anticipated. Fragmented data, unclear workflows, and runaway escalation rates are slowing deployments across industries.

“The technology itself often works well in demonstrations,” said Sanchit Vir Gogia, chief analyst with Greyhound Research. “The challenge begins when it is asked to operate inside the complexity of a real organization.” Burley Kawasaki, who oversees agent deployment at Creatio, and his team have developed a methodology built around three disciplines: data virtualization to work around data lake delays; agent dashboards and KPIs as a management layer; and tightly bounded use-case loops to drive toward high autonomy.

In simpler use cases, Kawasaki says these practices have enabled agents to handle up to 80-90% of tasks on their own. With further tuning, he estimates they could support autonomous resolution in at least half of use cases, even in more complex deployments.

“People have been experimenting a lot with proof of concepts, they've been putting a lot of tests out there,” Kawasaki told VentureBeat. “But now in 2026, we’re starting to focus on mission-critical workflows that drive either operational efficiencies or additional revenue.”

Why agents keep failing in production

Enterprises are eager to adopt agentic AI in some form or another, often because they're afraid to be left out and before they have even identified tangible real-world use cases, but run into significant bottlenecks around data architecture, integration, monitoring, security, and workflow design. The first obstacle almost always has to do with data, Gogia said. Enterprise information rarely exists in a neat or unified form; it is spread across SaaS platforms, apps, internal databases, and other data stores. Some are structured, some are not. But even when enterprises overcome the data retrieval problem, integration is a big challenge.
Agents rely on APIs and automation hooks to interact with applications, but many enterprise systems were designed long before this kind of autonomous interaction was a reality, Gogia pointed out. This can result in incomplete or inconsistent APIs, and systems can respond unpredictably when accessed programmatically.

Organizations also run into snags when they attempt to automate processes that were never formally defined, Gogia said. “Many business workflows depend on tacit knowledge,” he said. That is, employees know how to resolve exceptions they’ve seen before without explicit instructions, but those missing rules and instructions become startlingly obvious when workflows are translated into automation logic.

The tuning loop

Creatio deploys agents in a “bounded scope with clear guardrails,” followed by an “explicit” tuning and validation phase, Kawasaki explained. Teams review initial outcomes, adjust as needed, then re-test until they’ve reached an acceptable level of accuracy.

That loop typically follows this pattern:

Design-time tuning (before go-live): Performance is improved through prompt engineering, context wrapping, role definitions, workflow design, and grounding in data and documents.

Human-in-the-loop correction (during execution): Devs approve, edit, or resolve exceptions. Where humans have to intervene the most (escalation or approval), users establish stronger rules, provide more context, and update workflow steps, or they narrow tool access.

Ongoing optimization (after go-live): Devs continue to monitor exception rates and outcomes, then tune repeatedly as needed, helping to improve accuracy and autonomy over time.

Kawasaki’s team applies retrieval-augmented generation (RAG) to ground agents in enterprise knowledge bases, CRM data, and other proprietary sources. Once agents are deployed in the wild, they are monitored with a dashboard providing performance analytics, conversion insights, and auditability.
Essentially, agents are treated like digital workers. They have their own management layer with dashboards and KPIs.

For instance, an onboarding agent is incorporated into a standard dashboard interface that provides agent monitoring and telemetry. This is part of the platform layer (orchestration, governance, security, workflow execution, monitoring, and UI embedding) that sits “above the LLM,” Kawasaki said.

Users see a dashboard of agents in use and each of their processes, workflows, and executed results. They can “drill down” into an individual record (like a referral or renewal) that shows a step-by-step execution log and related communications to support traceability, debugging, and agent tweaking. The most common adjustments involve logic and incentives, business rules, prompt context, and tool access, Kawasaki said.

The biggest issues that come up post-deployment:

Exception handling volume can be high: Early spikes in edge cases often occur until guardrails and workflows are tuned.

Data quality and completeness: Missing or inconsistent fields and documents can cause escalations; teams can identify which data to prioritize for grounding and which checks to automate.

Auditability and trust: Regulated customers, in particular, require clear logs, approvals, role-based access control (RBAC), and audit trails.

“We always explain that you have to allocate time to train agents,” Creatio’s CEO Katherine Kostereva told VentureBeat. “It doesn’t happen immediately when you switch on the agent, it needs time to understand fully, then the number of mistakes will decrease.”

“Data readiness” doesn’t always require an overhaul

When looking to deploy agents, “Is my data ready?” is a common early question. Enterprises know data access is important, but can be turned off by a massive data consolidation project.

But virtual connections can allow agents access to underlying systems and get around typical data lake/lakehouse/warehouse delays.
Kawasaki’s team built a platform that integrates with data sources, and is now working on an approach that will pull data into a virtual object, process it, and use it like a standard object for UIs and workflows. This way, they don’t have to “persist or duplicate” large volumes of data in their database.

This technique can be helpful in areas like banking, where transaction volumes are simply too large to copy into a CRM, but are “still valuable for AI analysis and triggers,” Kawasaki said.

Once integrations and virtual objects are established, teams can evaluate data completeness, consistency, and availability, and identify low-friction starting points (like document-heavy or unstructured workflows). Kawasaki emphasized the importance of “really using the data in the underlying systems, which tends to actually be the cleanest or the source of truth anyway.”

Matching agents to the work

The best fit for autonomous (or near-autonomous) agents is high-volume workflows with “clear structure and controllable risk,” Kawasaki said. Examples include document intake and validation in onboarding or loan preparation, and standardized outreach like renewals and referrals.

“Especially when you can link them to very specific processes inside an industry, that’s where you can really measure and deliver hard ROI,” he said.

For instance, financial institutions are often siloed by nature. Commercial lending teams work in their own environment, wealth management in another. But an autonomous agent can look across departments and separate data stores to identify, for instance, commercial customers who might be good candidates for wealth management or advisory services.

“You think it would be an obvious opportunity, but no one is looking across all the silos,” Kawasaki said. Some banks that have applied agents to this very scenario have seen “benefits of millions of dollars of incremental revenue,” he claimed, without naming specific institutions.
However, in other cases, particularly in regulated industries, longer-context agents are not only preferable, but necessary. This applies, for instance, to multi-step tasks like gathering evidence across systems, summarizing, comparing, drafting communications, and producing auditable rationales.

“The agent isn’t giving you a response immediately,” Kawasaki said. “It may take hours, days, to complete full end-to-end tasks.”

This requires orchestrated agentic execution rather than a “single giant prompt,” he said. This approach breaks work down into deterministic steps to be performed by sub-agents. Memory and context management can be maintained across various steps and time intervals. Grounding with RAG can help keep outputs tied to approved sources, and users can choose to expand grounding to file shares and other document repositories.

This model typically doesn’t require custom retraining or a new foundation model. Whatever model enterprises use (GPT, Claude, Gemini), performance improves through prompts, role definitions, controlled tools, workflows, and data grounding, Kawasaki said.

The feedback loop puts “extra emphasis” on intermediate checkpoints, he said. Humans review intermediate artifacts (such as summaries, extracted facts, or draft recommendations) and correct errors. Those corrections can then be converted into better rules and retrieval sources, narrower tool scopes, and improved templates.

“What is important for this style of autonomous agent, is you mix the best of both worlds: The dynamic reasoning of AI, with the control and power of true orchestration,” Kawasaki said.

Ultimately, agents require coordinated changes across enterprise architecture, new orchestration frameworks, and explicit access controls, Gogia said. Agents must be assigned identities to restrict their privileges and keep them within bounds. Observability is critical; monitoring tools can record task completion rates, escalation events, system interactions, and error patterns.
This kind of evaluation must be a permanent practice, and agents should be tested to see how they react when encountering new scenarios and unusual inputs.

“The moment an AI system can take action, enterprises have to answer several questions that rarely appear during copilot deployments,” Gogia said. These include: What systems is the agent allowed to access? What types of actions can it perform without approval? Which activities must always require a human decision? How will every action be recorded and reviewed?

“Those [enterprises] that underestimate the challenge often find themselves stuck in demonstrations that look impressive but cannot survive real operational complexity,” Gogia said.
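
As a rough illustration only, the four questions Gogia lists (which systems an agent may access, what it may do without approval, what always needs a human, and how actions are recorded) could be encoded as a small policy check. The sketch below is not Creatio’s or Greyhound’s implementation; every class, field, system, and action name in it is hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


# Hypothetical policy object; none of these names come from a real product.
@dataclass
class AgentPolicy:
    allowed_systems: set[str]        # systems the agent may touch
    auto_actions: set[str]           # actions allowed without approval
    human_only_actions: set[str]     # actions that always need a human
    audit_log: list[dict] = field(default_factory=list)

    def decide(self, system: str, action: str) -> str:
        """Return 'allow', 'escalate', or 'deny' and record the decision."""
        if system not in self.allowed_systems:
            outcome = "deny"
        elif action in self.human_only_actions:
            outcome = "escalate"
        elif action in self.auto_actions:
            outcome = "allow"
        else:
            # Assumption: anything not explicitly listed goes to a human.
            outcome = "escalate"
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "system": system,
            "action": action,
            "outcome": outcome,
        })
        return outcome

    def escalation_rate(self) -> float:
        """Share of logged decisions that were escalated to a human."""
        if not self.audit_log:
            return 0.0
        escalated = sum(1 for e in self.audit_log if e["outcome"] == "escalate")
        return escalated / len(self.audit_log)


# Example requests; the systems and actions are invented for illustration.
policy = AgentPolicy(
    allowed_systems={"crm"},
    auto_actions={"send_renewal_reminder"},
    human_only_actions={"approve_loan"},
)
requests = [
    ("crm", "send_renewal_reminder"),
    ("crm", "approve_loan"),
    ("core_banking", "read_balance"),
    ("crm", "update_contact"),
]
results = [policy.decide(system, action) for system, action in requests]
```

Defaulting unlisted actions to escalation is one possible design choice; it trades early escalation volume for tighter bounds, and the logged escalation rate gives teams a number to tune against.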

- Startup Gimlet Labs is solving the AI inference bottleneck in a surprisingly elegant way on March 23, 2026

Gimlet Labs just raised an $80 million Series A for tech that lets AI run across NVIDIA, AMD, Intel, ARM, Cerebras and d-Matrix chips, simultaneously.